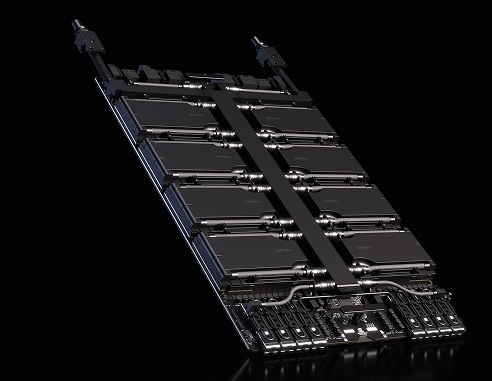

| Specification | Value |
|---|---|
| Form Factor | 8× NVIDIA Rubin SXM GPUs |
| Inference Performance (NVFP4) | 400 PFLOPS |
| Training Performance (NVFP4) | 280 PFLOPS |
| FP8 / FP6 Training Performance | 140 PFLOPS |
| INT8 Tensor Core Performance | 2 POPS |
| FP16 / BF16 Tensor Core Performance | 32 PFLOPS |
| TF32 Tensor Core Performance | 16 PFLOPS |
| FP32 Performance | 1,040 TFLOPS |
| FP64 / FP64 Tensor Core Performance | 264 TFLOPS |
| Matrix Compute (SGEMM / DGEMM) | 3,200 TFLOPS (SGEMM) / 1,600 TFLOPS (DGEMM) |
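
As a rough sanity check, the system-level compute figures can be divided across the eight GPUs to get approximate per-GPU peaks. This is a minimal sketch that assumes each system total is a simple sum over eight identical GPUs; the names `SYSTEM_PEAK_TERA_OPS` and `per_gpu` are hypothetical and not part of any NVIDIA tool. Values are in tera-operations per second (TFLOPS for the floating-point formats, TOPS for INT8).

```python
# Hypothetical helper: per-GPU peaks derived from the 8-GPU system totals above.
# Assumption: every system total is a simple sum over 8 identical GPUs.
NUM_GPUS = 8

# System-level peaks in tera-operations per second, copied from the table
# (TFLOPS for floating-point formats, TOPS for INT8).
SYSTEM_PEAK_TERA_OPS: dict[str, float] = {
    "NVFP4 inference": 400_000,
    "NVFP4 training": 280_000,
    "FP8/FP6 training": 140_000,
    "INT8": 2_000,
    "FP16/BF16 tensor": 32_000,
    "TF32 tensor": 16_000,
    "FP32": 1_040,
    "FP64": 264,
    "SGEMM": 3_200,
    "DGEMM": 1_600,
}


def per_gpu(totals: dict[str, float], num_gpus: int = NUM_GPUS) -> dict[str, float]:
    """Divide each system total evenly across num_gpus GPUs."""
    return {name: value / num_gpus for name, value in totals.items()}


if __name__ == "__main__":
    # Print one line per precision: name, then the per-GPU peak.
    for name, value in per_gpu(SYSTEM_PEAK_TERA_OPS).items():
        print(f"{name:>18}: {value:>9,.1f}")
```

Under that assumption, each GPU contributes about 50 PFLOPS of NVFP4 inference and 35 PFLOPS of NVFP4 training throughput. These are peak figures; sustained throughput on real workloads is typically lower.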

| Specification | Value |
|---|---|
| Total GPU Memory | 2.3 TB |
| Interconnect Technology | Sixth-Generation NVIDIA NVLink |
| NVLink Switch | NVIDIA NVLink 6 Switch |
| GPU-to-GPU Bandwidth | 3.6 TB/s per GPU |
| Total NVLink Switch Bandwidth | 28.8 TB/s |
| Networking Bandwidth | 1.6 TB/s |
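
The memory and interconnect figures can be cross-checked the same way. This sketch assumes decimal units (1 TB = 1,000 GB), that the 2.3 TB total is rounded, and that the 1.6 TB/s networking figure is the system's total scale-out bandwidth; all constant and function names are hypothetical.

```python
import math

NUM_GPUS = 8

# Figures copied from the table above (decimal units: 1 TB = 1,000 GB).
TOTAL_GPU_MEMORY_TB = 2.3        # rounded system total
NVLINK_PER_GPU_TBPS = 3.6        # GPU-to-GPU bandwidth, per GPU
NVLINK_SWITCH_TOTAL_TBPS = 28.8  # total NVLink Switch bandwidth
NETWORK_TBPS = 1.6               # assumption: total scale-out bandwidth of the system


def memory_per_gpu_gb(total_tb: float, num_gpus: int = NUM_GPUS) -> float:
    """Approximate GPU memory per GPU in GB, assuming an even split."""
    return total_tb * 1000 / num_gpus


def aggregate_nvlink_tbps(per_gpu_tbps: float, num_gpus: int = NUM_GPUS) -> float:
    """Sum of per-GPU NVLink bandwidth across all GPUs in the system."""
    return per_gpu_tbps * num_gpus


if __name__ == "__main__":
    mem = memory_per_gpu_gb(TOTAL_GPU_MEMORY_TB)
    nvlink = aggregate_nvlink_tbps(NVLINK_PER_GPU_TBPS)
    # Check that the per-GPU figure accounts for the listed switch total.
    assert math.isclose(nvlink, NVLINK_SWITCH_TOTAL_TBPS)
    print(f"Memory per GPU: ~{mem:.1f} GB")
    print(f"Aggregate NVLink: {nvlink:.1f} TB/s")
    print(f"NVLink vs. networking bandwidth: {nvlink / NETWORK_TBPS:.0f}x")
```

Eight GPUs at 3.6 TB/s each account exactly for the 28.8 TB/s switch total, and each GPU holds roughly 288 GB. Under these assumptions, the NVLink fabric offers about 18× the system's external networking bandwidth.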

| Specification | Value |
|---|---|
| Supported GPU Configurations | NVIDIA Rubin, NVIDIA Blackwell, NVIDIA Blackwell Ultra SXM |
| Primary Workloads | AI Training, AI Inference, Generative AI, Scientific Simulation, HPC Applications |
| Deployment Environment | AI Factories, Hyperscale Data Centers, Supercomputing Clusters |